At today’s Bondi AI Collective meetup at WOTSO Bondi Junction, we heard from Bondi Innovator Ben King, Founder of Aviato Consulting, which was recently named the #6 fastest-growing startup in Australia. Ben shared insights into Aviato’s journey and what he is seeing in the market.

Ben offered a grounded view of what enterprise AI adoption actually looks like in practice. The discussion moved well beyond generic AI enthusiasm into the realities of strategy, data, delivery, pricing, governance, talent, and scale.

What emerged was a picture of enterprise AI that is both more demanding and more promising than much of the public conversation suggests. The opportunity is real, but the organizations creating value are not simply deploying chat interfaces. They are making focused strategic choices, solving data fragmentation, building governance layers for agents, and redesigning how work gets done.

Focus is a growth strategy, not a limitation

One of the clearest themes was that Aviato’s growth has come from strategic discipline. Rather than trying to cover every cloud and every possible client need, the firm chose to focus only on Google Cloud and to target large enterprise customers.

That focus appears to have delivered multiple advantages at once:

- simpler operations
- deeper technical capability in one ecosystem
- better use of engineering talent
- stronger alignment with Google as a partner
- clearer market positioning

A large share of deals comes through Google referrals, which highlights an important point for AI services firms. Growth is not only about technical competence. It is also about fitting tightly into an ecosystem that has incentives to bring the firm into opportunities.

There was also a strong commercial lesson in how engagements are structured. Traditional time-and-materials consulting was described as creating mistrust, because clients assume the incentive is to maximize hours. More compelling engagements are increasingly tied to business outcomes, whether that is reducing call-center escalations, speeding contract review, or accelerating modernization work. In AI, measurable value is often a far stronger selling point than the technology itself.

The data problem remains the foundation problem

A major part of the discussion focused on the gap between enterprise ambition and enterprise readiness. Many organizations want to move quickly on AI, but their underlying data environments are fragmented across multiple systems, functions, and SaaS platforms.

The challenge is not simply that data is messy. It is that organizations often lack any unified architecture that allows data to be queried, connected, and used consistently across the business. That makes real AI deployment difficult because the bottleneck is not the model, but the enterprise itself.

Examples were given of data being pulled from systems such as:

- Workday
- HubSpot
- Salesforce
- finance platforms
- operational systems across different business units

The work of extracting, transforming, and consolidating that data into a usable environment was described as a long-term effort rather than a quick fix. That may not be the most glamorous side of AI adoption, but it is one of the most important. For many organizations, data unification is still the bridge between experimentation and actual enterprise value.
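As a rough illustration of why consolidation matters, the sketch below merges two exports into one record per account so that a single question can be answered across both. Everything in it is an assumption made for illustration: the row shapes, the field names (`AccountId`, `account`, `overdue_cents`), and the `consolidate` helper are hypothetical and do not reflect any vendor's real schema or anything shown in the session.

```python
from typing import Dict, List

# Hypothetical exports from two systems. Field names are illustrative only.
crm_rows: List[dict] = [
    {"AccountId": " ACME-01", "Owner": "Priya"},
    {"AccountId": "globex-07", "Owner": "Sam"},
]
billing_rows: List[dict] = [
    {"account": "acme-01", "overdue_cents": 125000},
]


def normalise_id(raw: str) -> str:
    """Make keys comparable across systems (whitespace and case differ)."""
    return raw.strip().upper()


def consolidate(crm: List[dict], billing: List[dict]) -> Dict[str, dict]:
    """Merge both sources into one record per normalised account id."""
    unified: Dict[str, dict] = {}
    for row in crm:
        key = normalise_id(row["AccountId"])
        unified.setdefault(key, {"account_id": key})["owner"] = row["Owner"]
    for row in billing:
        key = normalise_id(row["account"])
        unified.setdefault(key, {"account_id": key})["overdue_cents"] = row["overdue_cents"]
    return unified


unified = consolidate(crm_rows, billing_rows)

# One question spanning both systems: who owns accounts with overdue balances?
owners_to_chase = [
    rec["owner"] for rec in unified.values() if rec.get("overdue_cents", 0) > 0
]
print(owners_to_chase)
```

Even in this toy case, the join only works once identifiers are normalised, which hints at why the real effort across many systems is long-running.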

AI is both a cost story and a growth story

The discussion also explored the impact of AI on jobs, roles, and demand. Ben used Jevons paradox to frame the idea that when something becomes cheaper and more efficient, demand can increase rather than fall.

The ATM example came up as a useful analogy. Automation did not simply eliminate bank tellers. It changed the nature of branch work, reduced some costs, and helped enable a broader expansion of services.

That same logic may apply to AI. In some cases, especially with repetitive and outsourced tasks, there will clearly be displacement. But the broader effect may be more complex:

- some work disappears
- some work shifts to higher-value activities
- some organizations use AI to work through backlogs faster
- lower costs make previously uneconomic services viable
- new categories of demand begin to emerge

This is particularly important in areas such as legal services, support functions, and knowledge work. If AI changes the cost structure enough, the result is not just cheaper delivery of existing work. It can also mean entirely new offerings at new price points for new customers.

So the impact is unlikely to be uniform. The discussion suggested that the real question is not whether AI cuts jobs or creates them in the abstract, but how it reshapes the value chain, where roles move, and what new demand becomes possible.

The real frontier is not prompts, but enterprise AI systems

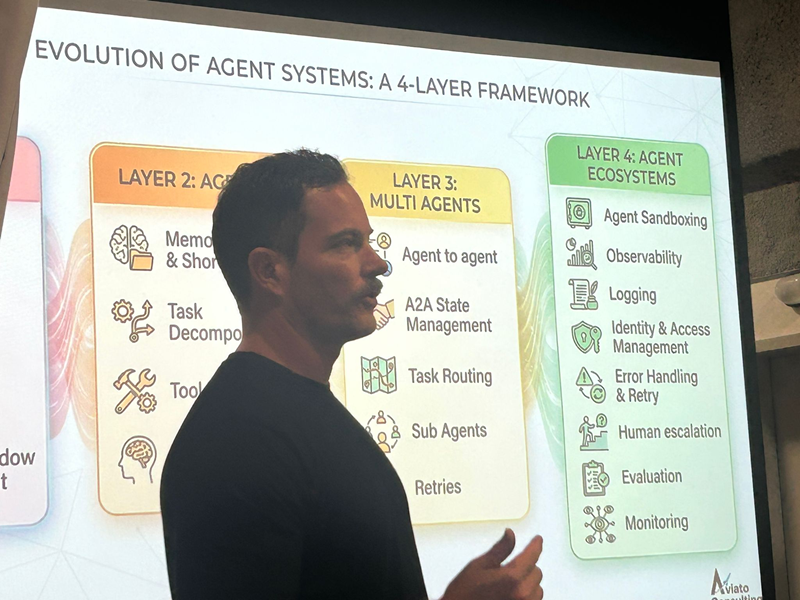

The most substantial part of the session focused on where enterprise AI is actually heading. The core argument was that many organizations are still focused on the visible and relatively simple layers of AI adoption, while the real complexity lies in the next stage.

A rough progression was described, moving through:

- prompting and basic AI usage
- tool use and workflow integration
- agents with memory
- orchestrated or multi-agent systems
- enterprise-grade management and governance layers

This is where the discussion became especially rich. The challenge is no longer just getting one agent to perform one useful task. The challenge is building an environment in which many agents can operate safely, reliably, and economically across the enterprise.

That requires capabilities such as:

- memory and context handling
- tool access management
- orchestration between agents
- retries and failure handling
- sandboxed execution
- observability and logging
- human escalation paths
- governance controls
- evaluation frameworks for changes over time

The point was not that every company needs all of this immediately. It was that once organizations move beyond a proof of concept and begin putting multiple agents into production, these requirements emerge very quickly. A single working agent can create the illusion that the hard part is over. In reality, the hard part often begins once that agent becomes one of many.
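To make a few of those capabilities concrete, here is a minimal sketch of a wrapper that gives any agent step retries, logging, and a human escalation path. It is an illustration under stated assumptions, not a description of any platform discussed in the session: `run_with_guardrails`, `StepResult`, and the `flaky_agent` stand-in are hypothetical names, and a real agent call would replace the stand-in.

```python
import logging
from dataclasses import dataclass
from typing import Callable, Optional

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent_runtime")


@dataclass
class StepResult:
    output: Optional[str]  # agent output, or None if every attempt failed
    attempts: int          # number of attempts actually made
    escalated: bool        # True if the task was handed to a human


def run_with_guardrails(
    agent_step: Callable[[str], str],
    task: str,
    max_attempts: int = 3,
    escalate: Callable[[str, Optional[Exception]], None] = lambda task, err: None,
) -> StepResult:
    """Run one agent step with retries, logging, and escalation on failure."""
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            output = agent_step(task)
            log.info("task=%r attempt=%d status=ok", task, attempt)
            return StepResult(output, attempt, escalated=False)
        except Exception as err:  # broad on purpose: any failure counts as a retry
            last_error = err
            log.warning("task=%r attempt=%d error=%s", task, attempt, err)
    # All attempts failed: hand the task to a person instead of failing silently.
    escalate(task, last_error)
    return StepResult(None, max_attempts, escalated=True)


# Hypothetical stand-in for an agent whose tool call times out twice.
calls = {"n": 0}


def flaky_agent(task: str) -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise RuntimeError("tool timeout")
    return f"done: {task}"


result = run_with_guardrails(flaky_agent, "summarise contract 17")
print(result)
```

Because the wrapper knows nothing about the task itself, the same few lines can sit around a legal agent or a support agent, which is the sense in which this kind of infrastructure is reusable.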

One of the strongest insights in this section was around evaluation. New models and model versions are constantly released, and teams often upgrade because the latest model appears better in demos or on benchmarks. But a newer or more expensive model is not automatically better for a specific enterprise use case.

That is why evaluation was described as one of the least discussed but most important disciplines in enterprise AI. Serious organizations need to be able to test whether a change in model, prompt, workflow, or configuration improves the actual business task being performed. Without that, production AI systems are being changed based on instinct rather than evidence.
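A minimal sketch of that discipline, assuming a small labelled set of cases for one business task, might look like the following. The cases, the two stand-in configurations, and the `pass_rate` helper are all hypothetical. In practice each configuration would wrap a real model, prompt, and workflow rather than a keyword rule.

```python
from typing import Callable, List, Tuple

# Hypothetical evaluation set for one task: route a support message.
EVAL_CASES: List[Tuple[str, str]] = [
    ("Invoice overdue by 45 days", "escalate"),
    ("Customer asks for opening hours", "self_serve"),
    ("Refund request above approval limit", "escalate"),
    ("Password reset request", "self_serve"),
]


def pass_rate(candidate: Callable[[str], str], cases: List[Tuple[str, str]]) -> float:
    """Fraction of cases where the candidate returns the expected label."""
    passed = sum(1 for text, expected in cases if candidate(text) == expected)
    return passed / len(cases)


def current_config(text: str) -> str:
    """Stand-in for the configuration running in production today."""
    lowered = text.lower()
    return "escalate" if "overdue" in lowered or "refund" in lowered else "self_serve"


def proposed_config(text: str) -> str:
    """Stand-in for a newer configuration that looks better in a demo."""
    return "escalate" if "overdue" in text.lower() else "self_serve"


scores = {
    "current": pass_rate(current_config, EVAL_CASES),
    "proposed": pass_rate(proposed_config, EVAL_CASES),
}
# Only adopt the change if it measurably improves the task being performed.
should_upgrade = scores["proposed"] > scores["current"]
print(scores, should_upgrade)
```

Here the proposed configuration misses the refund case, so the comparison argues against the upgrade regardless of how the newer option performs elsewhere.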

This in turn points to where a great deal of future consulting value may lie. The agent for a legal process or a customer service workflow may be specific, but the reusable infrastructure around those agents is not. Once an organization has a robust layer for governance, evaluation, access control, logging, and escalation, it can roll out additional AI workflows much faster.

That feels like one of the key shifts underway now: a move from isolated AI experiments to enterprise operating layers for AI.

The use cases are real and increasingly operational

The examples discussed were notable because they were not futuristic. They were practical and already underway.

Use cases mentioned included:

- legal contract review
- customer service and escalation reduction
- marketing and content workflows
- data querying across enterprise systems
- branding consistency
- legacy modernization
- coding and engineering pipelines

The contract review example was especially revealing. Large organizations often have slow, expensive legal workflows, with backlogs that hold up the business. That creates a very clear case for AI support.

Another strong example was COBOL modernization. Rather than simply translating old code directly into a new language, the process described was more structured:

- generate tests for the original system
- create a design specification from the legacy code
- use that specification to generate the new code
- validate the new code against the original behavior
- integrate security and delivery steps into the pipeline

The important lesson was that effective AI workflows are often not direct substitutions. They are redesigned processes. The gains come not just from model capability, but from structuring the workflow in a way that makes AI reliable and useful.
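The first and fourth steps of the modernization process can be sketched as follows: record what the original system does for a set of inputs, then check that the replacement reproduces it. This is a simplified illustration, not the pipeline described in the session. The interest calculation, both functions, and the helper names are hypothetical, and a Python function stands in for the legacy routine.

```python
from typing import Callable, List, Tuple

Case = Tuple[Tuple[int, int], int]  # ((balance_cents, rate_bp), expected_output)


def legacy_interest(balance_cents: int, rate_bp: int) -> int:
    """Stand-in for the legacy routine: integer arithmetic that truncates."""
    return balance_cents * rate_bp // 10_000


def modern_interest(balance_cents: int, rate_bp: int) -> int:
    """Stand-in for the replacement generated from the design specification."""
    return (balance_cents * rate_bp) // 10_000


def record_golden_cases(
    legacy: Callable[[int, int], int], inputs: List[Tuple[int, int]]
) -> List[Case]:
    """Capture the original behavior as tests before any code is rewritten."""
    return [(args, legacy(*args)) for args in inputs]


def find_mismatches(
    candidate: Callable[[int, int], int], golden: List[Case]
) -> List[Tuple[Tuple[int, int], int, int]]:
    """Return (inputs, expected, actual) for every case the candidate gets wrong."""
    mismatches = []
    for args, expected in golden:
        actual = candidate(*args)
        if actual != expected:
            mismatches.append((args, expected, actual))
    return mismatches


inputs = [(100_000, 250), (99, 125), (0, 500), (150, 100)]
golden = record_golden_cases(legacy_interest, inputs)
mismatches = find_mismatches(modern_interest, golden)
print(mismatches)
```

A replacement that rounded instead of truncating would fail the `(150, 100)` case, which is the kind of subtle behavioral difference that recorded tests are meant to surface before release.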

In AI services, sales and delivery matter as much as technology

Another valuable part of the conversation was the candor about what actually builds an AI services business. The hardest part, as Ben described it, was not the technology. It was sales, trust, and delivery.

Early growth came through highly personalized outreach, relationship-building, and then increasingly through referrals and reputation. That is a useful counterweight to the idea that AI businesses can grow mainly through automated outreach and content.

At the same time, a referral-driven business model raises the bar on execution. If much of the pipeline comes through ecosystem trust and partner introductions, poor delivery has outsized consequences. That is why structured implementation practices, clear updates, and customer satisfaction tracking were emphasized. Reliable execution is not an operational detail. It is part of the growth engine.

Internal leverage, offshore capability, and the next phase of growth

The session also highlighted how AI is being used internally inside the firm itself. AI is helping keep overhead lean, automate internal work, and make better use of bench time. Rather than leaving people idle between projects, internal projects are used to build tools, develop products, and train engineers in emerging AI delivery capabilities.

That creates several forms of leverage at once:

- improved utilization
- faster learning cycles
- internal productivity gains
- potential future products
- stronger delivery capability for clients

A significant offshore operating model, especially in India, was another part of that leverage. This was presented not just as a labor arbitrage play, but as a way to build a cost structure that supports growth while maintaining customer-facing capability where needed. There was also frank acknowledgment that getting this right involves substantial operational complexity.

Alongside the services business, there is also movement toward productization. Internal tools built for delivery management, security scanning, and workflow acceleration may eventually become products in their own right. That said, the immediate constraint does not appear to be market demand. It is talent.

The biggest challenge ahead was described as finding people who combine strong technical ability with the ability to work effectively with enterprise customers. That may be one of the defining constraints for the next wave of AI services firms.

The larger lesson

The broader lesson from the session was that real enterprise AI adoption is becoming more systemic. The first wave was about experimentation, prompting, and isolated use cases. The next wave is about architecture, evaluation, governance, orchestration, and scale.

That is a different level of maturity. It requires not just clever use of models, but disciplined operating systems for how AI is embedded into the enterprise.

The organizations that create the most value from AI are unlikely to be those with the most demos or the most pilots. They are likely to be those that can connect fragmented data, redesign workflows, manage many agents safely, evaluate changes rigorously, and build the internal capability to scale all of that over time.

That is where enterprise AI is heading.